Category: Hardware

-

BYOD – Bring your own display (to the booth and elsewhere)

I think being completely paperless (except for Christmas cards) is just fab for a great number of reasons. However, there are two aspects of working with just a tiny laptop that I have never quite come to terms with: 1. The space available on a small laptop screen is rather limited. Maybe this is just…

-

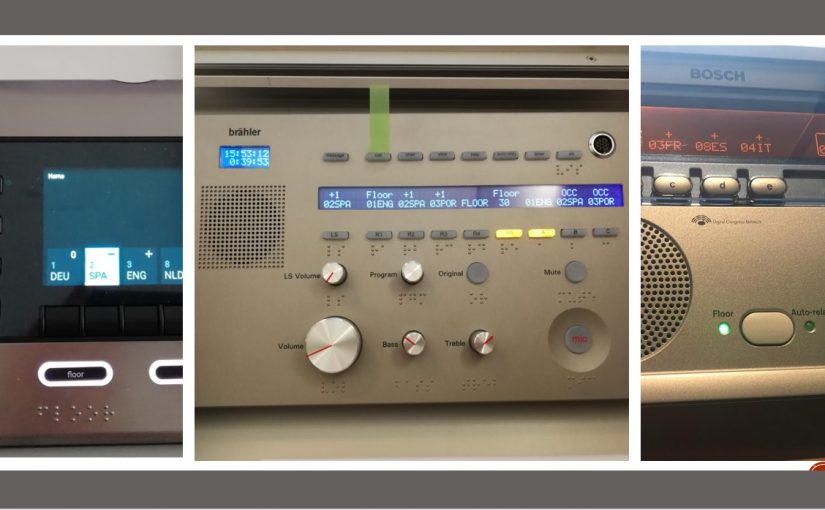

Hard consoles – Quick guide to old normal relay interpreting

This blog post is intended for all those students who started their studies of conference interpreting right after the outbreak of Covid-19. More than one year into the pandemic, many of them haven’t entered a physical booth or put their hands on a hard console yet. In order not to leave them completely unprepared, I…

-

Will 3D audio make remote simultaneous interpreting a pleasure?

Now THAT’S what I want: Virtual Reality for my ears! Apparently, simultaneous interpreters are not the only ones suffering from it: the confusion of being unable to physically locate a speaker by ear can lead to a condition that’s sometimes referred to as “Zoom fatigue”. But it looks like there is still reason…

-

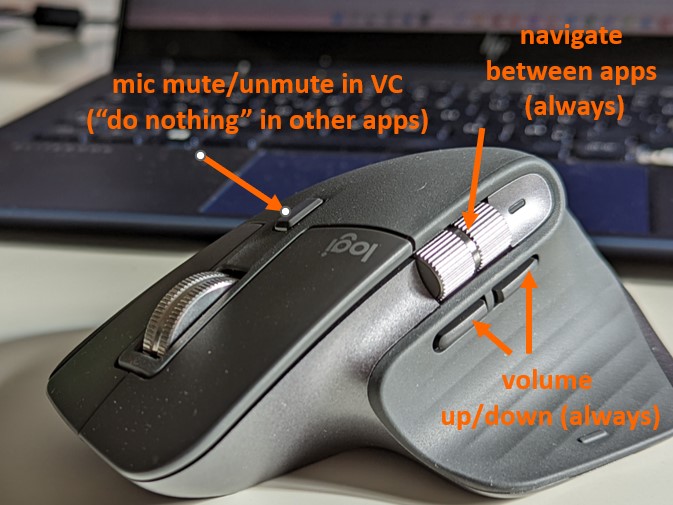

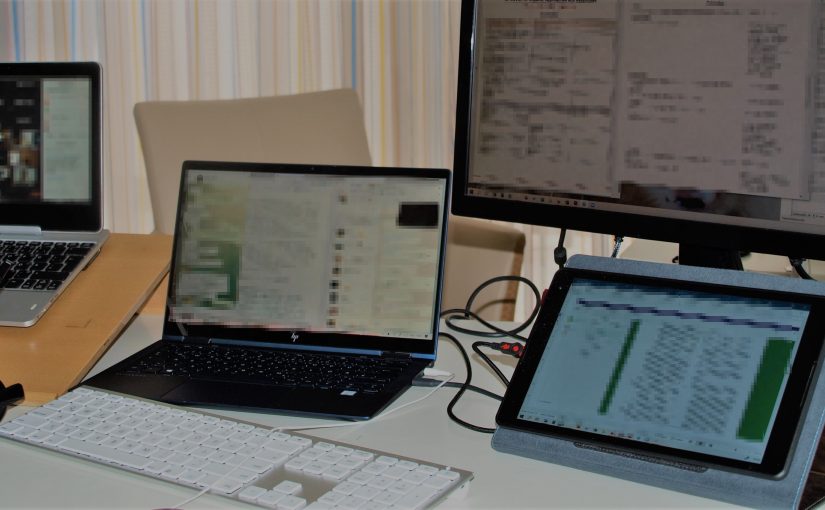

You can never have too many screens, can you?

I don’t know about you, but I sometimes struggle, when using my laptop in the booth, to squeeze the agenda, list of participants, glossary, dictionary, web browser and meeting documents/presentations onto one screen. Not to mention email, messenger or shared notepad when working in separate booths in times of COVID-19 … Or even the soft…

-

Simultaneous interpreting in the time of coronavirus – Boothmates behind glass walls

Yesterday was one of the rare occasions where conference interpreters were still brought to the client’s premises for a multilingual meeting. Participants from abroad were connected via a web meeting platform, while the few people who were on-site anyway were sitting at tables 2 meters apart from each other. But what about the interpreters, who…

-

Remote Simultaneous Interpreting … is it really necessary – and does it even work?!

In a workshop jointly organised by AIIC and VKD and led by Klaus Ziegler, we had the opportunity in mid-May in Hamburg to explore these questions in depth. Over two days in a coworking space, a group of organising interpreters learned, discussed and, in an interpreting hub, remote simultaneous interpreting via the cloud-based simultaneous interpreting software Kudo…

-

Paperless Preparation at International Organisations – an Interview with Maha El-Metwally

Maha El-Metwally has recently written a master’s thesis at the University of Geneva on preparation for conferences of international organisations using tablets. She is a freelance conference interpreter for Arabic A, English B, French and Dutch C domiciled in Birmingham. How come you know so much about the current preparation practice of conference interpreters at so…

-

Can computers outperform human interpreters?

Unlike many people in the translation industry, I like to imagine that one day computers will be able to interpret simultaneously between two languages just as well as or better than human interpreters do, what with artificial neurons and neural networks’ pattern-based learning. After all, once hardware capacity allows for it, an artificial neural…

-

Simultaneous interpreting with VR headset

+++ for English see below +++ for Spanish, further down +++ At the end of 2016, I was treated to a very special kind of interpreting experience: interpreting with a virtual reality headset. As part of his master’s thesis at SDI München, Sebastiano Gigliobianco tested three remote interpreting scenarios in case studies, and I had the chance, on the premises of PCS in Düsseldorf, to be there as a test subject…

-

Data backup for mother and children | family-friendly data backup

+++ for English, see below +++ What I myself never managed in over 20 years, my child has achieved even before reaching digital adulthood (i.e. having a WhatsApp account of their own) – total data loss. Admittedly, in this case only in the form of all the precious photos on my discarded phone, but still. It is slowly becoming clear:…

-

Dictation Software instead of Term Extraction? | Dictation software as term extraction for interpreters?

+++ for English see below +++ The other day, when my doctor cheerfully dictated his thoughts to the computer during our consultation instead of typing, the question occurred to me: “Why don’t I do that?” A short bout of shop talk about dictation programs followed, and no sooner was I home than I naturally had to try it out myself. The…

-

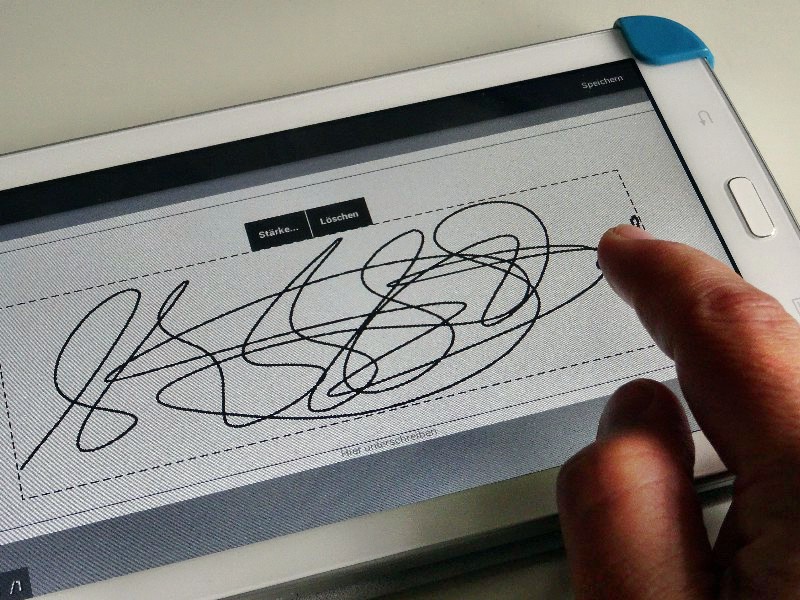

Signing contracts on your smartphone or tablet

+++ For English see below +++ You know how it is: there you are, lying under the palm trees, when the contract for your next assignment drops in by email and wants to be signed and returned immediately. Now what? Run to reception, have the contract printed (highly confidential) and fax it back signed? Of course not. Because, to my immense delight, I have in my…

-

Taking a tablet into the interpreting booth? – The iPad Interpreter by and with Alex Drechsel

I accept that a tablet is lighter, smaller and handier and also has a longer battery life. Personally, however, I prefer to carry an extra 400 g around and have a proper computer (= my complete office including all my data) with me, which offers me, wherever I am, a full-fledged office suite, a “big” keyboard and the option of as many…

-

Touchscreen as a notepad

Perhaps one or two of you got some form of touch-sensitive screen for Christmas? I am a big fan of touchscreens in the booth because, just like a toddler, I only have to point with my fingers (digitus!) at what I want to see, so that, even while interpreting simultaneously, I can phenomenally intuitively…