Tag: simultaneous interpreter

-

Great Piece of Research on Terminology Assistance for Conference Interpreters

-

Can computers outperform human interpreters?

Unlike many people in the translation industry, I like to imagine that one day computers will be able to interpret simultaneously between two languages just as well as, or even better than, human interpreters do, what with artificial neurons and the pattern-based learning of neural networks. After all, once hardware capacity allows for it, an artificial neural…

-

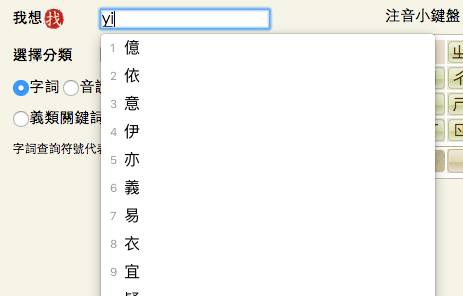

Hello from the other side – Chinese and Terminology Tools. A guest article by Felix Brender 王哲謙

As Mandarin Chinese interpreters, we understand that we are somewhat rare beings. After all, we work with a language which, despite being a UN language, is not one you’d encounter regularly. We wouldn’t expect colleagues working with other, more frequently used languages to know about the peculiarities of Mandarin. This applies not least to terminology…

-

Mein Gehirn beim Simultandolmetschen | My brain interpreting simultaneously

Usually, we find ourselves wondering what on earth is going on in the head of the speaker we are interpreting. Our colleague Eliza Kalderon, however, asks the reverse question in her doctoral thesis: what actually goes on in interpreters' heads during simultaneous interpreting? To find out, she puts test subjects in…